[Fuhua Insurance] DolphinScheduler Tech Stack

Introduction to DolphinScheduler

What is DS?

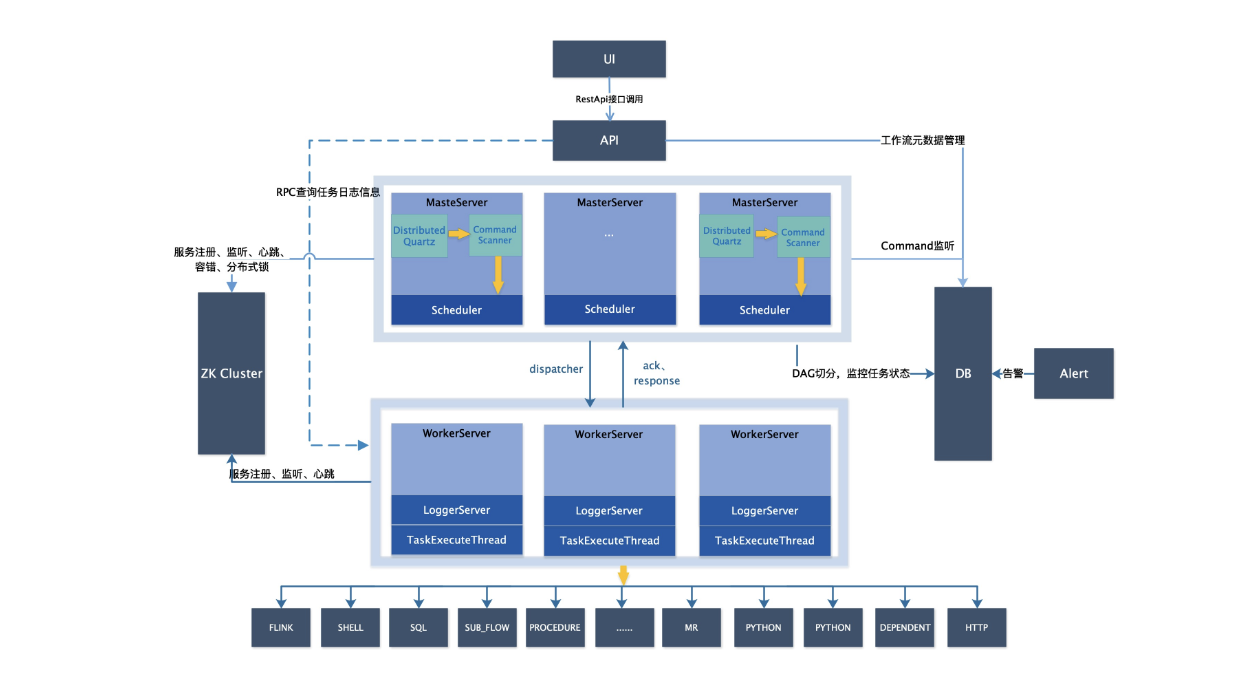

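DolphinScheduler is a distributed workflow scheduling platform. It organizes task flows as a DAG (directed acyclic graph), provides resource monitoring, task monitoring, and timed scheduling, and can schedule more than 30 types of tasks.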
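To make the DAG idea a bit more concrete, here is a minimal sketch that is independent of DolphinScheduler itself. It uses the coreutils `tsort` command to derive a valid run order from "upstream downstream" dependency pairs. The task names (`extract`, `transform`, `load`) and the helper name `dag_order` are hypothetical.

```shell
#!/usr/bin/env bash
# Each pair is "upstream downstream": the upstream task must finish first.
# Task names are hypothetical, for illustration only.
dag_order() {
  printf '%s\n' \
    "extract transform" \
    "transform load" | tsort
}

# Print the tasks in an order that respects every dependency.
dag_order
```

If the pairs contained a cycle, `tsort` would report a loop instead of a clean order, which is why a workflow has to be acyclic to be schedulable.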

Official open-source website

DS Architecture

Cluster roles

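- MasterServer: the master node role of DS

- WorkerServer: the worker node role of DS

- ApiApplicationServer: the role that serves the API

- AlertServer: the role that provides the alerting service

- LoggerServer: the role that provides the logging service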

DS Configuration

Required software:

- ZooKeeper

- MySQL

- Hadoop

Configuration files to modify:

- common.properties

- datasource.properties

- install_config.conf

- dolphinscheduler_env.sh

Changes:

    # /export/server/dolphinscheduler/conf/common.properties
    fs.defaultFS=hdfs://up01:8020
    yarn.application.status.address=http://up01:8088/ws/v1/cluster/apps/%s

    # /export/server/dolphinscheduler/conf/datasource.properties: no changes needed, just verify it
    # postgresql or mysql

    # /export/server/dolphinscheduler/conf/config/install_config.conf
    dbtype="mysql"
    dbhost="192.168.88.166:3306"
    username="root"
    dbname="dolphinscheduler"
    password="123456"
    zkQuorum="192.168.88.166"
    installPath="/export/server/dolphinscheduler"
    deployUser="root"
    resourceStorageType="HDFS"
    defaultFS="hdfs://up01:8020"
    singleYarnIp="up01"
    resourceUploadPath="/dolphinscheduler"
    apiServerPort="12345"
    ips="up01"
    sshPort="22"
    masters="up01"
    workers="up01"
    alertServer="up01"
    apiServers="up01"

    # /export/server/dolphinscheduler/conf/env/dolphinscheduler_env.sh
    export HADOOP_HOME=/export/server/hadoop
    export HADOOP_CONF_DIR=/export/server/hadoop/etc/hadoop
    export SPARK_HOME2=/export/server/spark
    export PYTHON_HOME=/root/anaconda3/envs/pyspark_env
    export JAVA_HOME=/export/server/jdk1.8.0_241
    export HIVE_HOME=/export/server/hive
    export PATH=$HADOOP_HOME/bin:$PYTHON_HOME/bin:$JAVA_HOME/bin:$HIVE_HOME/bin:$SPARK_HOME2/bin:$SPARK_HOME2/sbin:$PATH

DS environment variable configuration

It is best not to configure this.

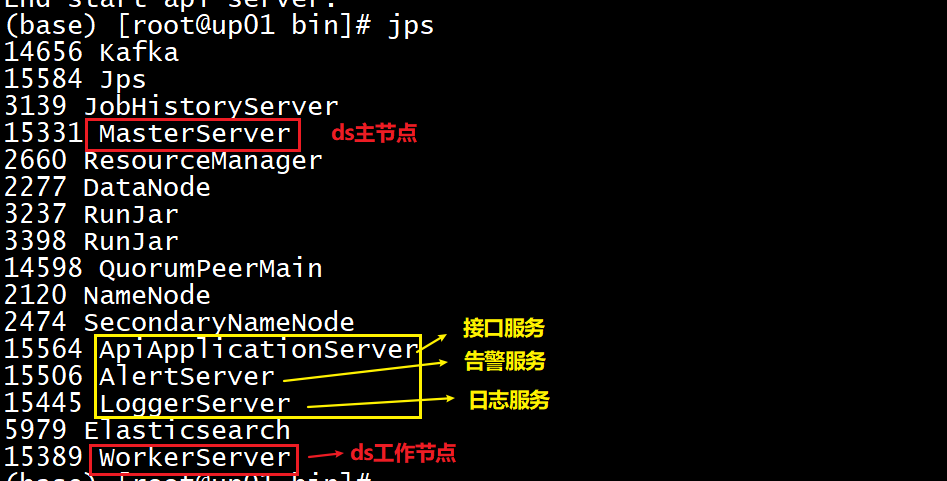

Starting DS

Start the DolphinScheduler services

Prerequisite: start HDFS and ZooKeeper first.
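One way to check this prerequisite before starting DS is to probe the service ports. The sketch below assumes bash (it relies on bash's `/dev/tcp` redirection) and the coreutils `timeout` command. Port 8020 comes from the `fs.defaultFS` setting in this post; 2181 is only ZooKeeper's default client port and is an assumption here, as are the helper names `port_open` and `report`.

```shell
#!/usr/bin/env bash
# port_open HOST PORT: succeed if a TCP connection opens within 2 seconds.
port_open() {
  local host="$1" port="$2"
  timeout 2 bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# report HOST PORT LABEL: print a one-line status for a service.
report() {
  if port_open "$1" "$2"; then
    echo "$3 reachable at $1:$2"
  else
    echo "$3 NOT reachable at $1:$2"
  fi
}
```

For this setup the calls would be `report up01 8020 HDFS` and `report 192.168.88.166 2181 ZooKeeper`. An open port only shows that something is listening, so treat this as a quick sanity check rather than proof that the service is healthy.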

    # Stop all services in the cluster with one command
    sh ./bin/stop-all.sh

    # Start all services in the cluster with one command
    sh ./bin/start-all.sh

    # Start or stop a single service
    sh ./bin/dolphinscheduler-daemon.sh start master-server
    sh ./bin/dolphinscheduler-daemon.sh stop master-server
    sh ./bin/dolphinscheduler-daemon.sh start worker-server
    sh ./bin/dolphinscheduler-daemon.sh stop worker-server
    sh ./bin/dolphinscheduler-daemon.sh start api-server
    sh ./bin/dolphinscheduler-daemon.sh stop api-server
    sh ./bin/dolphinscheduler-daemon.sh start logger-server
    sh ./bin/dolphinscheduler-daemon.sh stop logger-server
    sh ./bin/dolphinscheduler-daemon.sh start alert-server
    sh ./bin/dolphinscheduler-daemon.sh stop alert-server

After startup, confirm that all five processes are running.
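The check can be scripted. The sketch below reads `jps`-style output (`<pid> <MainClass>` per line) and prints whichever of the five roles is missing. It assumes the main class names shown by `jps` match the role names listed in the cluster roles section, which may differ between DS versions; the helper name `missing_ds_processes` is made up for this example.

```shell
#!/usr/bin/env bash
# The five role names from the cluster roles section (assumed to match jps output).
DS_PROCESSES="MasterServer WorkerServer ApiApplicationServer AlertServer LoggerServer"

# missing_ds_processes: read jps-style lines ("<pid> <MainClass>") on stdin
# and print every expected process name that does not appear.
missing_ds_processes() {
  local running name
  running=$(awk '{print $2}')
  for name in $DS_PROCESSES; do
    printf '%s\n' "$running" | grep -qx "$name" || echo "$name"
  done
}
```

On the DS host, `jps | missing_ds_processes` prints nothing when all five processes are up, and otherwise lists the ones to look into.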

### <font size=4 color=6699ff face='华文楷体'>Access:</font>

- http://up01:12345/dolphinscheduler

- Username: admin

- Password: dolphinscheduler123

## <font size=5 color='orange' face='华文楷体'>DolphinScheduler Web UI Overview</font>

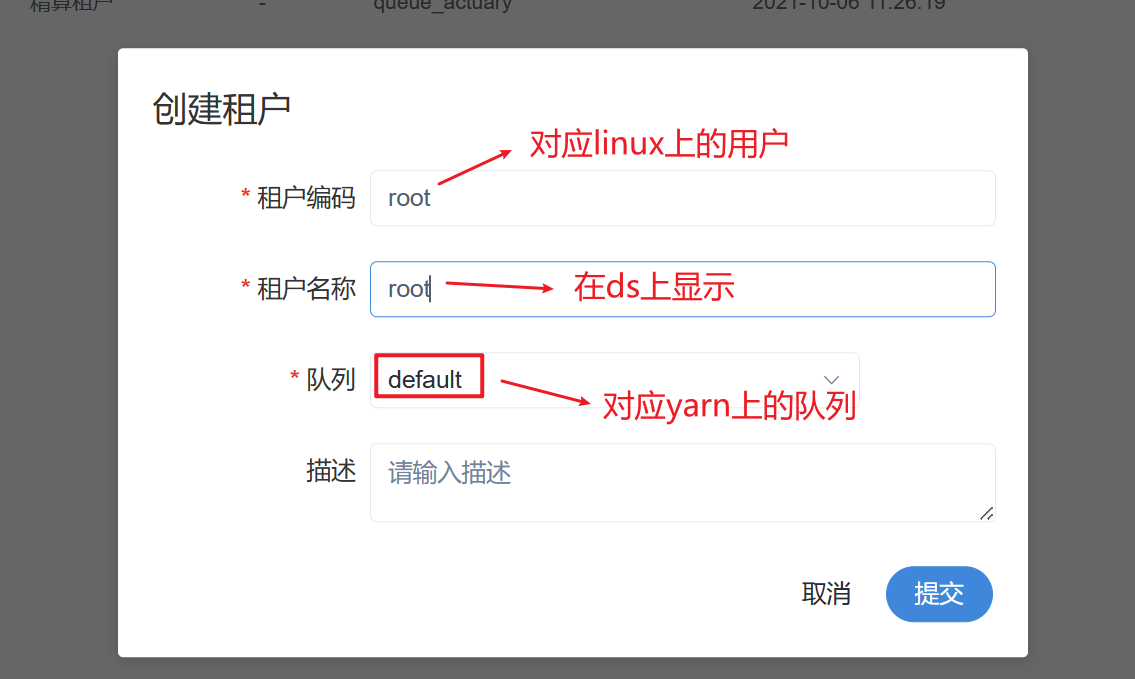

### <font size=4 color=6699ff face='华文楷体'>The tenant concept</font>

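- A tenant corresponds to a user on the Linux system

- When creating a tenant, use an existing Linux user with elevated privileges directly as the tenant

- Adding a Linux user and granting it elevated privileges: https://blog.csdn.net/weixin_40816738/article/details/103920528

- Users: access control within DS

- Resource center: the entry point for uploading files or folders

- Datasource center: configure data source connections

- Worker groups: the entry point for configuring the available worker nodes

- Tokens: used for development against the DS API

- API docs: http://up01:12345/dolphinscheduler/doc.html?language=zh_CN&lang=cn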
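As a rough sketch of how a token might be used from a script: the helper below sends a GET request with the token in a `token` HTTP header, which is how DS access tokens are usually passed. The base URL is the access address from this post; the helper name `ds_api_get` is hypothetical, and the endpoint path is left to the caller because the available paths depend on the DS version (check the API docs page above).

```shell
#!/usr/bin/env bash
# Base URL taken from the access address in this post.
DS_BASE_URL="http://up01:12345/dolphinscheduler"

# ds_api_get TOKEN PATH: GET an endpoint, passing the token in the "token" header.
# PATH should be an endpoint listed on the API docs page (doc.html).
ds_api_get() {
  local token="$1" path="$2"
  curl -sS -H "token: ${token}" "${DS_BASE_URL}${path}"
}
```

A call could look like `ds_api_get "$DS_TOKEN" "/projects/list-paging?pageNo=1&pageSize=10"`. That path is an assumption based on older releases, so verify it against the API docs before relying on it.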

## <font size=5 color='orange' face='华文楷体'>Using DS</font>

### <font size=4 color=6699ff face='华文楷体'>DS usage</font>

- Package the pyspark virtual environment and upload it to HDFS

cd /root/anaconda3/envs/pyspark_env/

zip -r pyspark_env.zip ./

hdfs dfs -mkdir /env

hdfs dfs -put pyspark_env.zip /env


- Configure the related parameters in DS

--archives hdfs://up01:8020/env/pyspark_env.zip#pyspark_env \
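For context, `--archives <uri>#<name>` asks YARN to unpack the archive into a directory called `<name>` in each container's working directory, so the job also needs to be told to use the Python interpreter inside it. The sketch below prints one possible set of options. The archive path follows the upload commands in this post (`/env/pyspark_env.zip`). The two `--conf` lines are an assumption about how the rest of the options might look, not something taken from the original setup, and the helper name `pyspark_env_options` is hypothetical.

```shell
#!/usr/bin/env bash
# pyspark_env_options ARCHIVE_URI: print spark-submit options, one per line,
# that ship the zipped environment and point PySpark at its interpreter.
pyspark_env_options() {
  local archive="$1" name="pyspark_env"
  printf '%s\n' \
    "--archives ${archive}#${name}" \
    "--conf spark.yarn.appMasterEnv.PYSPARK_PYTHON=./${name}/bin/python" \
    "--conf spark.executorEnv.PYSPARK_PYTHON=./${name}/bin/python"
}

# Print the options for the archive uploaded earlier in this post.
pyspark_env_options "hdfs://up01:8020/env/pyspark_env.zip"
```

The relative path `./pyspark_env/bin/python` works here because the zip was created from inside the environment directory, so `bin/` sits at the root of the archive.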
